My Two Cents on AI

Short NVDA

Almost all the gains in the S&P 500 this year have come from a rally in AI-related stocks. These stocks now make up a very meaningful part of the index, so even if you’re an index investor it behooves you to have a view on how all of this plays out.

I’ll present my rather simplistic model below.

A caveat: none of this is an exercise in precision. Given how quickly things are moving, my numbers are going to be off by tens if not hundreds of billions of dollars. The goal is to form a view on where value is likely to accrue over the next three to five years, avoid owning anything that’s egregiously overpriced, and maybe find companies that are underpriced.

The Setup

To start, it’s worth estimating how much consumers and businesses will spend on AI.

Today, we know that only 5% of OpenAI’s users pay for the service. That’s about 50m people globally. At roughly $250/year each (assuming most are on the $20/month plan), that’s about $13B. Given OpenAI’s ARR is ~$25B today, this tracks: about 50% of revenue is consumer and 50% is enterprise. Since ChatGPT has been out for a few years now, my sense is the 5% number is not going to grow meaningfully. Consumers are used to getting things for free online (paid for by ads), and very few are going to be willing to pony up $20/month for something they don’t absolutely need. If you assume consumers spend a similar amount of money on Claude and Gemini, total consumer spend on AI today is about $50B. While this seems meaningful, we’ll see later that it’s small enough to ignore for the purposes of this exercise.
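A quick check of the consumer math, as a sketch under the post’s figures plus two readings of my own: that the 5% is a share of roughly 1B users (which is what “5% is about 50m people” implies), and that “a similar amount on Claude and Gemini” means each earns about what ChatGPT does. Three providers at ChatGPT’s level come to roughly $38B, the same order of magnitude as the ~$50B estimate and small next to the $1T figure discussed below.

```python
# Back-of-envelope consumer AI spend from the post's figures.
# Assumptions (mine): ~1B ChatGPT users, implied by "5% ... is about 50m people",
# and Claude and Gemini each earning about what ChatGPT does from consumers.
TOTAL_USERS = 1_000_000_000
PAID_SHARE = 0.05
ANNUAL_PRICE = 250            # $20/month is $240/year; the post rounds to ~$250
OPENAI_ARR = 25_000_000_000
PROVIDERS = 3                 # ChatGPT, Claude, Gemini

paying_users = round(TOTAL_USERS * PAID_SHARE)
chatgpt_consumer = paying_users * ANNUAL_PRICE
consumer_share_of_arr = chatgpt_consumer / OPENAI_ARR
total_consumer = chatgpt_consumer * PROVIDERS

print(f"Paying ChatGPT users:      {paying_users / 1e6:.0f}m")
print(f"ChatGPT consumer revenue:  ${chatgpt_consumer / 1e9:.1f}B")
print(f"Share of OpenAI ARR:       {consumer_share_of_arr:.0%}")
print(f"Consumer spend, 3 players: ${total_consumer / 1e9:.1f}B")
```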

Moving on to business AI spending. Based on revenue numbers from Anthropic (~$50B ARR), OpenAI and Gemini ($60B Google Cloud ARR), my sense is that enterprises are spending about $75B a year on AI today. This is a guess and it’s obviously growing quickly, but I think it’s helpful to contextualize where spend is today.

For any of the valuations of AI companies to make sense, enterprises and consumers need to be spending $1T a year on AI within the next 3-5 years, and I think 90% of that spend is going to have to come from enterprises. For context, the companies in the S&P 500 currently make an aggregate annual profit of ~$2.5T. They cannot spend $1T on AI without either commensurate revenue growth or a reduction in costs. At the 12% net margin of the average S&P 500 company, AI would need to create about $8T in new revenue to pay for itself. It’s hard for me to see how you create an additional $8T in revenue when US GDP is $30T, so I’m going to assume the spending on AI is funded by job cuts. If companies lay off ten million people who make $100k a year on average, they can redirect that $1T to AI. This doesn’t seem like a stretch. Most large companies are bloated and could easily cut 10% of their workforce without any decline in revenue.
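The arithmetic behind the two funding paths, as a sketch using only the figures in the paragraph above. Dividing the AI bill by the 12% margin gives the revenue that would have to appear, about $8.3T or a bit over a quarter of US GDP (a loose comparison, since company revenue and GDP measure different things). The layoff path counts salaries only, not fully loaded employee costs.

```python
# Two ways to fund $1T/year of AI spend, using the post's figures.
AI_SPEND = 1_000_000_000_000        # $1T a year
SP500_NET_MARGIN = 0.12             # average S&P 500 net margin
US_GDP = 30_000_000_000_000         # $30T

# Path 1: new revenue whose profit covers the AI bill.
revenue_needed = AI_SPEND / SP500_NET_MARGIN
share_of_gdp = revenue_needed / US_GDP   # loose comparison: revenue is not value added

# Path 2: cost cuts, redirecting laid-off salaries to AI.
LAYOFFS = 10_000_000
AVG_SALARY = 100_000
salary_pool = LAYOFFS * AVG_SALARY

print(f"Revenue needed at a 12% margin: ${revenue_needed / 1e12:.1f}T "
      f"({share_of_gdp:.0%} of US GDP)")
print(f"Salaries freed by layoffs:      ${salary_pool / 1e12:.1f}T")
```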

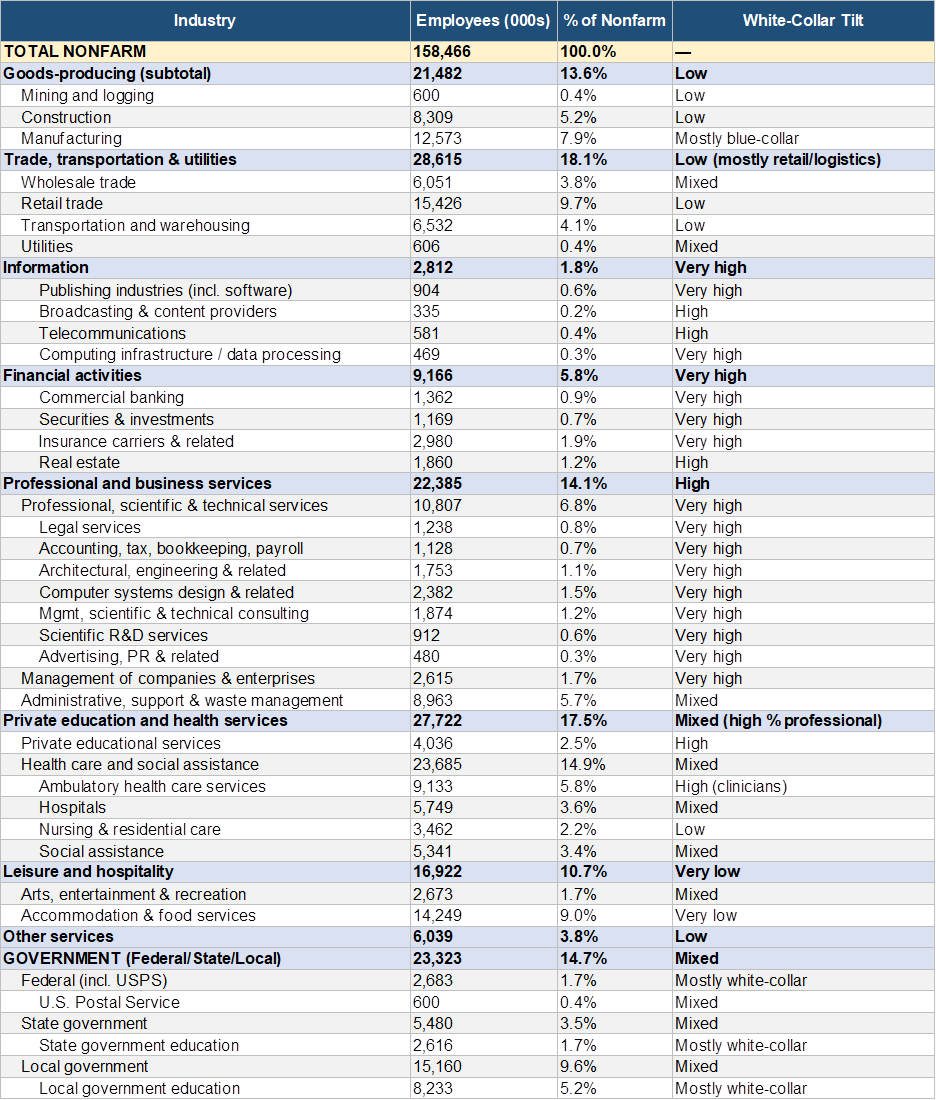

This is the starting point for the analysis. I assume that in three to five years enough people will be laid off from businesses all over the world (excluding China) for companies to spend $1T on AI. You could argue this is conservative and that 15-20m people will be laid off instead, in which case AI spend could hit $1.5-2T a year. Feel free to put your assumptions into the model here. The 10m number seems reasonable to me because if you exclude sectors like healthcare, education and government, there aren’t actually that many jobs that are going to be displaced by AI in the next three years. Longer term, education and governments should also shed jobs, but the nature of these employers means it probably takes closer to ten years to see a meaningful change in employment. The BLS has a great table showing employment in America by category here. I had Claude summarize it below.

My sense is the real job losses are going to come from professional and business services (about five million people laid off). I struggle to see another five million people, but maybe it’s two million from other categories and three million from Europe. European companies’ AI spend will likely accrue to US companies, just as their tech spend has for the last twenty years.
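Putting the headcount sources and scenarios side by side, as a sketch: the breakdown and the $100k average salary are the post’s, and the dictionary keys are just illustrative labels. The base case sums to 10m people and $1T a year; the 15-20m upside case maps to $1.5-2T.

```python
# Where the post's 10m layoffs might come from, and what each headcount
# scenario implies for annual AI spend at $100k per displaced salary.
AVG_SALARY = 100_000

layoffs_by_source = {
    "Professional & business services": 5_000_000,
    "Other categories": 2_000_000,
    "Europe": 3_000_000,
}
base_case = sum(layoffs_by_source.values())

# Base case plus the 15-20m upside case.
scenarios = {"base": base_case, "upside_low": 15_000_000, "upside_high": 20_000_000}
ai_spend = {name: heads * AVG_SALARY for name, heads in scenarios.items()}

for name, spend in ai_spend.items():
    print(f"{name:>12}: {scenarios[name] / 1e6:.0f}m layoffs -> ${spend / 1e12:.1f}T/yr")
```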

As the CEO of Databricks points out in this excellent video, we’ve already got AGI. The models don’t need to get better to be immensely useful in the enterprise; they just need all the data and context that currently sits in employees’ heads. Employees are not necessarily going to give up that knowledge easily knowing the goal is to replace them, so replacing employees with AI is really a human and cultural challenge.

A large part of this exercise is thinking through ‘steady state’ net income margins for the companies involved in AI. My view is that it’s extremely rare for companies in a competitive market to make more than a 10-15% net income margin. Uber is a good illustration. They effectively ‘won’ the rideshare battle, but despite being the dominant player for half a decade now, their true post-tax net income margin is about 10%. The companies that make meaningfully higher margins, like Google and Microsoft, are effectively monopolies.

The AI Stack

Let’s dive into the economics for AI companies.

Like Nvidia, I divide the AI stack into five layers:

1. The application layer

2. The model layer

3. The infrastructure layer

4. The hardware layer

5. The chip manufacturing layer

My mental model is that enterprise AI spending enters the top of the ‘funnel’ at the application layer, with each layer keeping some profit before passing the remaining dollars down to the next layer.

The Application Layer

Most corporations are going to use AI through agents made available by Anthropic, OpenAI, Microsoft, Google, Salesforce, Harvey and a whole bunch of companies that are private or don’t exist yet. This is where the $1T in enterprise AI spending will flow. A number of companies will of course hire Deloitte, Accenture and others to help them ‘implement AI’, but I ignore this for the purposes of this discussion, because the payments to these consultants will not necessarily be ongoing.

Competition in the application layer is going to be intense. Just look at the coding tool space, where Claude Code, GitHub Copilot and Cursor are all vying for share. Some companies (like Microsoft) have a distribution advantage, but that feels weaker than it did ten years ago given how quickly companies like Anthropic have gained adoption.

My assumption is that the application layer in aggregate makes 10% net income margins. You could argue margins will be higher in steady state, especially if a handful of players end up dominating the space. I could easily see 15-20%. However, given how quickly the space is evolving, 10% seems reasonable for a 3-5 year view.

It also feels like the single biggest cost for these companies is going to be tokens, so it wouldn’t surprise me if these were 20-30% gross margin businesses with 15% of revenue going to employee costs, leaving about 10% for net income. Salespeople’s salaries alone are likely to be 10% of revenue for these products: OpenAI has grown from 1k to 5k people in a year, and almost all of that growth is in the GTM function.
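A small sketch of that cost structure per $100 of application-layer revenue. Like the post, it treats tokens as the only cost of goods and ignores taxes, so ‘profit’ here is closer to a pre-tax margin; `application_pnl` is a hypothetical helper. It shows the 10% figure holds at the midpoint of the 20-30% gross margin range, with 5% at the low end and 15% at the high end.

```python
EMPLOYEE_SHARE = 0.15   # employee costs as a share of revenue, mostly sales/GTM


def application_pnl(revenue: float, gross_margin: float) -> dict:
    """Split application-layer revenue into token spend, employees and profit.
    Tokens are treated as the only cost of goods; taxes are ignored."""
    token_cost = revenue * (1 - gross_margin)   # paid to the model layer
    employee_cost = revenue * EMPLOYEE_SHARE
    profit = revenue - token_cost - employee_cost
    return {"tokens": token_cost, "employees": employee_cost, "profit": profit}


for gm in (0.20, 0.25, 0.30):
    pnl = application_pnl(100.0, gm)
    print(f"gross margin {gm:.0%}: tokens ${pnl['tokens']:.0f}, "
          f"employees ${pnl['employees']:.0f}, profit ${pnl['profit']:.0f}")
```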

The Model Layer

Today, there are really three players at this layer: Anthropic, OpenAI and Google (Gemini). While Anthropic seems to be the leader today, competition is intense and the leader only seems to be a few months ahead of the competition at any point in time. Even ‘cheap’ Chinese models are supposedly only three to six months behind the best U.S. model.

In any business where your product becomes obsolete or is easily replicated by others within a few months, it’s going to be hard to make money. I think you have to assume America will likely ban Chinese models on national security grounds, so the competition will be between the existing players and efforts from Microsoft, Amazon and Meta.

The current thinking of people like Satya Nadella is that the model layer will commoditize. Having used Claude and Gemini extensively, I find them quite different, and Claude is clearly meaningfully better, so I’m not sure how this will really evolve. But given the market dynamics, it’s hard for me to see these players making more than a 10% margin on average.

We’re already at a stage where models are training each other, so I’d expect employee costs to be minimal for a pure model-layer company (I’m modeling 3% of revenue), with the bulk of their costs going to compute (both training and inference).

The Infrastructure Layer

I define this layer as where the models run. Right now the hyperscalers (Amazon, Microsoft and Google) dominate this space, but a number of newer players like Oracle and CoreWeave are getting in on the game. Companies like Anthropic and OpenAI, with tens of billions in revenue, could easily fund the build-out of their own infrastructure over time and cut out the hyperscalers. I therefore model revenue in this layer as split equally between Amazon, Microsoft, Google and the neoclouds (Oracle, CoreWeave, Nebius etc.).

Given the competition, it’s hard to see this layer making more than a 10% steady-state profit margin. The current hyperscalers have software that makes model deployment more efficient and lets enterprises easily bring models to their data that already sits in AWS and Azure, so I assume they capture an additional 5% of margin for this activity. This is really the markup companies are willing to pay to avoid moving their data to another provider. The hyperscalers could also discount their other cloud offerings to entice customers to bring their AI workloads to their clouds.

The hyperscalers also need to spend on power and people to keep the datacenters running. The running cost of power is hard to predict given it depends on the source of power, but I model 5% of revenue as the cost of people and power here.
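Here is that split as a sketch per $100 of AI infrastructure revenue. The player list, the equal split and the margins are the post’s assumptions; `infrastructure_split` is a hypothetical helper. Blending three hyperscalers at 15% with the neoclouds at 10% gives a 13.75% layer margin, and about $81 of every $100 is passed on to the hardware layer.

```python
BASE_MARGIN = 0.10          # steady-state margin for everyone in this layer
HYPERSCALER_PREMIUM = 0.05  # extra margin for data already in AWS/Azure/GCP
PEOPLE_AND_POWER = 0.05     # running costs as a share of revenue

PLAYERS = {
    "Amazon": True,         # True = hyperscaler, earns the premium
    "Microsoft": True,
    "Google": True,
    "Neoclouds": False,     # Oracle, CoreWeave, Nebius etc. as one bucket
}


def infrastructure_split(revenue: float) -> dict:
    """Split layer revenue equally across players; whatever is not profit or
    people/power is treated as spend on the hardware layer."""
    share = revenue / len(PLAYERS)
    out = {}
    for name, is_hyperscaler in PLAYERS.items():
        margin = BASE_MARGIN + (HYPERSCALER_PREMIUM if is_hyperscaler else 0.0)
        out[name] = {
            "revenue": share,
            "profit": share * margin,
            "to_hardware": share * (1 - margin - PEOPLE_AND_POWER),
        }
    return out


split = infrastructure_split(100.0)
for name, row in split.items():
    print(f"{name:>10}: revenue ${row['revenue']:.2f}, profit ${row['profit']:.2f}, "
          f"to hardware ${row['to_hardware']:.2f}")
total_profit = sum(row["profit"] for row in split.values())
print(f"Blended layer margin: {total_profit / 100:.2%}")
```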

The Hardware Layer

This is where all the value from AI is currently accruing. Just look at the stock chart of any chip company over the last year.

NVIDIA alone will make close to $200B in profits this year. The three HBM companies (using MU as a proxy) will also make close to $200B in profits. Given the revenue in the model layer today is only ~$100B, this is clearly not sustainable. Even if total enterprise AI spending gets to $1T, I can only see ~$500B flowing into the hardware layer.
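One way to see where the ~$500B comes from is to chain the layer assumptions together. This is a minimal sketch, not the author’s spreadsheet: `run_funnel` and `LAYERS` are hypothetical, the application layer’s 75% token spend is the midpoint of its assumed 20-30% gross margin, and the infrastructure margin is the 13.75% blend of three hyperscalers at 15% and the neoclouds at 10%. Consumer spend, and any enterprise spend that goes straight to a lower layer, are ignored.

```python
# (layer, net margin, own operating costs as a share of revenue)
# Values are the post's assumptions; the infrastructure margin is a blend.
LAYERS = [
    ("application", 0.10, 0.15),       # employees, mostly go-to-market
    ("model", 0.10, 0.03),             # small teams; the rest is compute
    ("infrastructure", 0.1375, 0.05),  # people and power
]


def run_funnel(top_of_funnel: float) -> dict:
    """Pass spend down the stack: each layer keeps its profit, pays its own
    operating costs, and spends the remainder on the layer below."""
    revenue = top_of_funnel
    flows = {}
    for name, margin, opex in LAYERS:
        flows[name] = {"revenue": revenue, "profit": revenue * margin}
        revenue *= 1 - margin - opex
    # How this splits across chips, memory, storage and networking is left open.
    flows["hardware"] = {"revenue": revenue}
    return flows


for layer, flow in run_funnel(1e12).items():
    print(f"{layer:>14}: revenue ${flow['revenue'] / 1e9:,.0f}B")
```

With $1T at the top, about $750B reaches the model layer, about $650B the infrastructure layer and about $530B the hardware layer, close to the ~$500B estimate above.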

With agents expected to be the primary way that enterprises leverage AI, the current thinking is that CPUs will be just as important as GPUs, so it’s anyone’s guess how this $500B of revenue flowing into the hardware layer is allocated across chips, memory, storage and networking in steady state.

All the hyperscalers have their own CPU chips and Google and Amazon have their own GPU equivalents. Microsoft is also working on their own inference chips.

You can look at my assumptions for how the ~$500B in revenue is distributed across the hardware layer as well as the margins for this layer. Note the margins are much lower than the margins in the most recent quarter for companies like Micron and Nvidia, but my assumption is that increasing competition and supply mean that margins will normalize over time. We also need to think about steady state spending on hardware vs spending today during the buildout phase.

The Manufacturing Layer

The great irony of the chip layer is that pure-play chip companies are really software companies. The employees at Nvidia, AMD and Sandisk use software to design chips, and the designs are sent to companies like TSMC in Taiwan and Kioxia in Japan, where the chips are manufactured. In some sense, the manufacturers have a lot more leverage than the chip design companies, because it’s much harder to set up a fab than to hire a few chip design engineers to make chips for you.

For this reason, I assume companies at this layer make 50% net income margins for the next three to five years.

Conclusions

Many companies play across multiple layers of the AI stack. Google is the best example: they have a frontier model in Gemini, datacenters and their own chips. My spreadsheet is set up to disaggregate these pieces so they can be valued separately. I apply a 25x multiple to net income across the board, because the top line likely grows at 10-15% a year for the next ten years.
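The 25x multiple makes it easy to move between valuations and the earnings they require. A 25x multiple is a 4% earnings yield, so each $1T of value needs about $40B of steady-state net income; at the 10% margins assumed for most layers, that is roughly $400B of revenue. The function names below are illustrative.

```python
PE_MULTIPLE = 25      # the post's multiple on steady-state net income
NET_MARGIN = 0.10     # the steady-state margin assumed for most layers


def value_from_net_income(net_income: float) -> float:
    return net_income * PE_MULTIPLE


def net_income_needed(valuation: float) -> float:
    return valuation / PE_MULTIPLE


earnings_yield = 1 / PE_MULTIPLE
needed = net_income_needed(1e12)
revenue_needed = needed / NET_MARGIN

print(f"Earnings yield at 25x:          {earnings_yield:.0%}")
print(f"Net income needed for $1T:      ${needed / 1e9:.0f}B")
print(f"Revenue needed at a 10% margin: ${revenue_needed / 1e9:.0f}B")
```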

For the hyperscalers, here is my quick-and-dirty math on what they’re worth:

Google – $5T → 2.5-3T for existing ad biz, 1T for the datacenter and chip biz, 1T for the model biz

Amazon – $3T → 1T for retail, 1T for AWS (excluding AI), 1T for datacenter and chip biz

Microsoft – $3T → 2.5T for existing software biz (assuming it holds up), 0.75T for data center and chip biz
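Adding up the segment values, as a quick sketch: the figures are the post’s, with Google’s ad business kept as its 2.5-3T range, and `Segment` and `sum_of_parts` are hypothetical names. Google comes to $4.5-5T, Amazon to $3T and Microsoft to about $3.25T, and the AI-dependent share works out to roughly 40-44% for Google, a third for Amazon and under a quarter for Microsoft.

```python
from typing import NamedTuple


class Segment(NamedTuple):
    name: str
    low: float    # $T, low end of the post's estimate
    high: float   # $T, high end (same as low when no range is given)
    is_ai: bool   # True if the value depends on future AI spend


COMPANIES = {
    "Google": [
        Segment("existing ad business", 2.5, 3.0, False),
        Segment("datacenter and chips", 1.0, 1.0, True),
        Segment("model business", 1.0, 1.0, True),
    ],
    "Amazon": [
        Segment("retail", 1.0, 1.0, False),
        Segment("AWS excluding AI", 1.0, 1.0, False),
        Segment("datacenter and chips", 1.0, 1.0, True),
    ],
    "Microsoft": [
        Segment("existing software", 2.5, 2.5, False),
        Segment("datacenter and chips", 0.75, 0.75, True),
    ],
}


def sum_of_parts(segments: list[Segment]) -> dict:
    ai_low = sum(s.low for s in segments if s.is_ai)
    ai_high = sum(s.high for s in segments if s.is_ai)
    other_low = sum(s.low for s in segments if not s.is_ai)
    other_high = sum(s.high for s in segments if not s.is_ai)
    return {
        "total_low": ai_low + other_low,
        "total_high": ai_high + other_high,
        # AI share is largest when the non-AI segments sit at their low end.
        "ai_share_max": ai_high / (ai_high + other_low),
        "ai_share_min": ai_low / (ai_low + other_high),
    }


for company, segs in COMPANIES.items():
    r = sum_of_parts(segs)
    print(f"{company:>9}: ${r['total_low']:.2f}-{r['total_high']:.2f}T, "
          f"AI share {r['ai_share_min']:.0%}-{r['ai_share_max']:.0%}")
```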

The market seems to agree, so these all look fairly valued today. You could argue they’re priced to perfection, and if it takes longer than three years to get to $1T in enterprise AI spend, they could have downside. Google seems the most exposed, since close to half its value comes from anticipated AI spend, versus about a third for Amazon and a quarter for Microsoft.

Chip design companies like Nvidia, AMD and Sandisk seem the most overvalued and could easily be down 50% in a year or two. If you must have exposure to the chip stack, TSMC seems like a much better way to get it.

Notes

Today a lot of the ‘spending’ on AI is circular. The hyperscalers are spending $250B on Nvidia chips in anticipation of demand. NVIDIA is taking its $150B in cash profits and investing it in companies like OpenAI, which then spend on compute from the hyperscalers. At some point, that $150B from Nvidia needs to be replaced by corporations spending similar amounts of money. Given the growth in Anthropic’s revenue, this doesn’t seem like too much of a stretch.

The loss of white-collar jobs isn’t likely to be that dramatic over the next five years, so seat-based software companies with complex, hard-to-replace software should actually be OK.